Built for the Age of Artificial Intelligence.

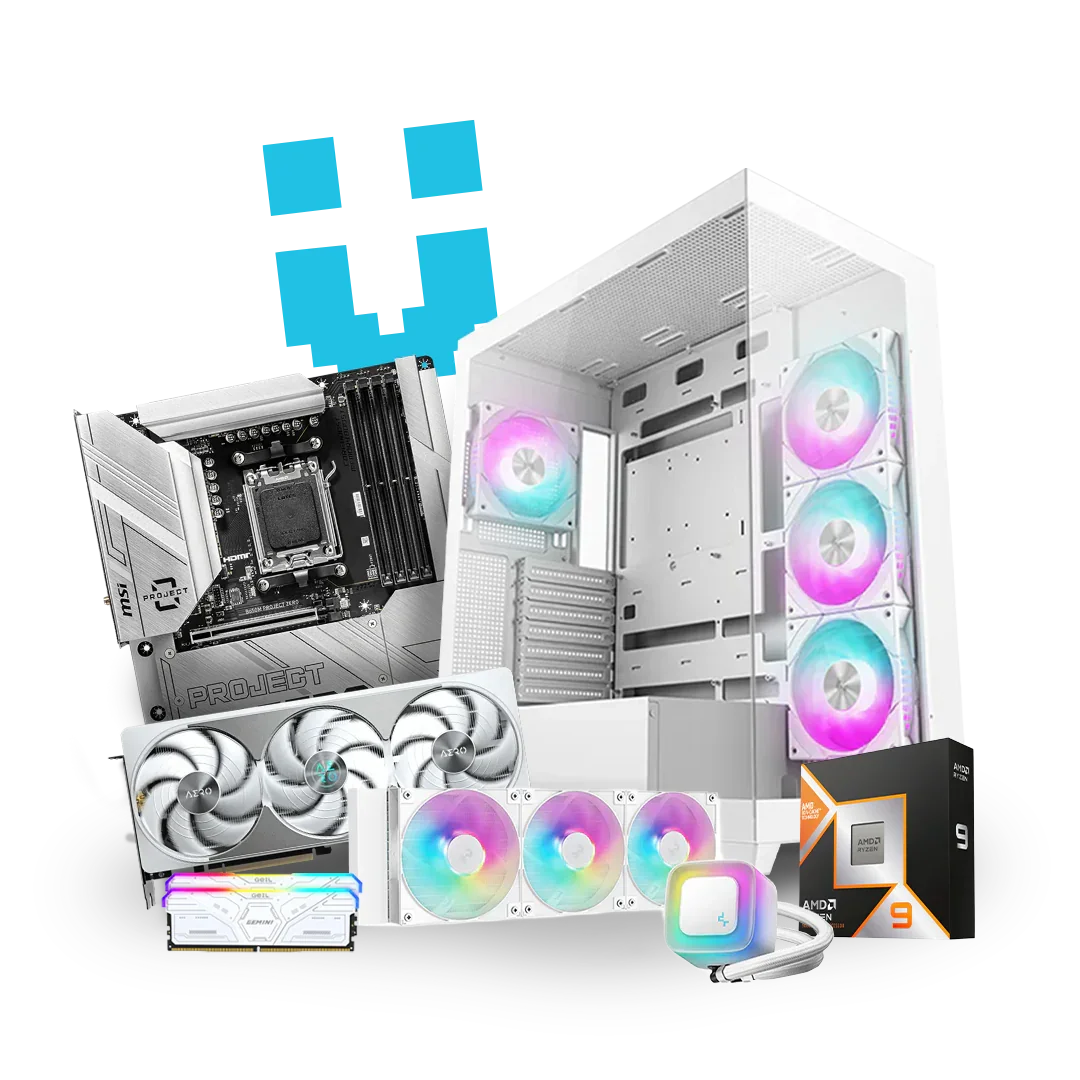

AMD Threadripper. Up to 64 cores. Up to 256GB DDR5. Up to 72GB GPU VRAM. Pop!_OS pre-installed. These are not gaming PCs repurposed for AI; they are purpose-built AI machines, hand-assembled in South Africa.

Every Brutech Threadripper AI workstation ships with Pop!_OS pre-installed. Pop!_OS is System76's Ubuntu-based Linux distribution, with best-in-class NVIDIA driver support, CUDA out of the box, and none of the overhead of Windows standing between your model and your GPU.

The Brutech Threadripper range runs from the Darwin (24-core, RTX 5070 Ti) up to the flagship Einstein (64-core, RTX 5000 Blackwell 72GB, 256GB DDR5). All five machines share the same ASUS Pro WS TRX50-SAGE WIFI platform, Samsung Gen5 NVMe, Seagate IronWolf Pro mass storage, and a 1600W 80+ Gold PSU — built for 24/7 sustained compute, not bursts.

With Ollama and LM Studio running natively on Pop!_OS, the Darwin and Edison turn your desk into a private inference endpoint. The 24-core Threadripper 9960X handles tokenisation, batching, and multi-session requests without blinking, while the RTX 5070 Ti or RTX 5080 handles the tensor operations. No API keys. No rate limits. No data leaving your building.
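For teams wiring their own tools to a local endpoint like this, here is a minimal Python sketch that uses only the standard library. It assumes Ollama is running on its default port (11434) and calls its `/api/generate` REST endpoint with streaming turned off. The model tag `llama3.1:8b` and the function names are illustrative examples, not part of any Brutech configuration.

```python
import json
import urllib.request

# Assumption: Ollama is serving on its default local address and port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(model: str, prompt: str,
                           url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the generated text."""
    req = build_generate_request(model, prompt)
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    # With "stream": False, Ollama replies with a single JSON object whose
    # "response" field holds the full completion.
    return body["response"]
```

To try it, pull a model first (`ollama pull llama3.1:8b`), then call `generate("llama3.1:8b", "Summarise this contract in three bullet points.")`. Because the request only goes to `localhost`, the prompt and the response stay on the machine.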

The Newton steps up to the Threadripper 9970X (32 cores) and the NVIDIA RTX 5000 Blackwell with 48GB of ECC VRAM: the headroom you need for serious fine-tuning runs in PyTorch, Hugging Face Transformers, and JAX. ECC memory detects and corrects the single-bit errors that could otherwise silently corrupt an overnight training run. 128GB of DDR5 system memory means your dataset preprocessing never stalls waiting for RAM.

The Hawking combines the 64-core Threadripper 9980X with the RTX 5000 Blackwell 48GB ECC, giving your MLOps team a machine that can handle data preprocessing, model serving, and vector database indexing simultaneously without one workload starving the others. Run LangChain, Qdrant, Weaviate, or pgvector pipelines alongside your inference server on the same machine. Pop!_OS keeps overhead minimal, so every core and every GPU cycle goes to your work, not to the OS.

The Einstein is the most capable AI workstation Brutech builds. The RTX 5000 Blackwell 72GB ECC gives you the VRAM to hold a 70B-parameter model at 4-bit quantisation entirely in GPU memory, with room to spare for long context windows and no offloading to system RAM. Larger models, or 70B models at full FP16 precision (roughly 140GB of weights), can be partially offloaded to the 256GB of DDR5 R-DIMM system memory, which also keeps the largest datasets in memory. 64 Threadripper cores handle everything else. This is the machine for AI studios, research teams, and businesses building proprietary models on sensitive data that cannot leave the premises.
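A quick way to sanity-check which models fit in a given amount of VRAM is to multiply the parameter count by the bytes stored per weight. The Python sketch below does that arithmetic. The figures for the GGUF formats `q8_0` and `q4_0` follow from their block layouts (8.5 and 4.5 bits per weight). The 8GB reserve for KV cache and activations is an assumption; in practice it grows with context length and batch size. The function names are hypothetical.

```python
# Approximate bytes stored per model weight at each precision.
# q8_0 and q4_0 are GGUF block formats: 32 weights share one fp16 scale,
# giving 8.5 and 4.5 bits per weight respectively.
BYTES_PER_WEIGHT = {
    "fp16": 2.0,
    "q8_0": 1.0625,
    "q4_0": 0.5625,
}


def weight_gb(params_billion: float, precision: str) -> float:
    """Memory for the weights alone, in decimal GB (10**9 bytes)."""
    # (params_billion * 1e9 weights) * bytes_per_weight / 1e9 bytes per GB
    return params_billion * BYTES_PER_WEIGHT[precision]


def fits_in_vram(params_billion: float, precision: str,
                 vram_gb: float, reserve_gb: float = 8.0) -> bool:
    """True if the weights plus an assumed KV-cache/activation reserve fit."""
    return weight_gb(params_billion, precision) + reserve_gb <= vram_gb
```

For example, `weight_gb(70, "fp16")` gives 140.0 and `weight_gb(70, "q4_0")` gives 39.375, so on a 72GB card only the quantised 70B model fits with headroom to spare. This estimate ignores runtime overheads, so treat it as a lower bound.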

The Threadripper AI Range

Five builds. Every AI workload covered. All running Pop!_OS. Enquire for pricing.

Ready to Build Your AI Stack?

These machines are not off-the-shelf — they are configured for your specific workload. Tell us what you're building and we'll recommend the right Threadripper, confirm availability, and walk you through the build timeline.

- Which models and frameworks you're running

- How much GPU VRAM you need: 16GB, 48GB, or 72GB

- Dataset sizes and training run duration

- Any custom software environment requirements

- Whether you're a solo researcher, studio, or enterprise team

No obligation. No sales pressure.